A Guide to Video & Audio for Microsoft Windows

What is this? Online video is booming, and more dads become hobby “Spielbergs” every year. Falling prices and the growing power and capability of video recording, playback and transmission equipment are also doing their part to aid this trend.

Chances are good that at some point you received a media file, audio or video, that you wanted to play back on your computer but couldn’t. Your friend opened it without problems, but you got a cryptic, technical error message instead, with no real solution offered.

If you started editing videos and publishing your creations on CD, DVD, online or elsewhere, the compatibility problems with the video and audio data you work on probably increased exponentially compared to the time when you just consumed media.

That was at least what happened to me.

I found myself spending more and more time on solving those issues and less and less time on actually editing and publishing videos. I had to learn more about the subject than I ever wanted to, but I was kind of forced to deal with it anyway. I collected a lot of resources and experiences over the past months and even years and sat down one day to work on a consolidated article, post, guide or whatever you want to call it. Well, here is the result of this work that I actually enjoy: writing (and talking hehe).

I hope that this article and the information and resources in it will help you solve some of these issues faster than I did, and give you a general understanding of the subject in a much shorter time. I found many great resources, but none like this one. The resources I found were often very detailed and very technical. They talked about my problems, but gave answers that I did not understand and asked me to make decisions without explaining the consequences of each choice. I pretty much had to rely on instinct, luck and trial and error, which is not a great way of fixing Windows issues efficiently and for good.

I try to stay general and get specific only in the few cases where I think I have to, in the hope that by then I have provided enough background for you to have a pretty good idea of what the details mean.

Feel free to use the comments to provide feedback, suggestions, praise or ask questions.

Thanks and Cheers!

Carsten aka Roy/SAC

The Containers – Media Files

A video container contains the video images, the audio and any additional features and content for the presentation of what normal people call “a video”. The additional stuff often goes unnoticed, or many people do not believe that it is part of the video file itself.

Those things include “closed captions” or “subtitles”, “chapter indexes”, “menus” like DVD menus, and “metadata” like “Genre” tags, “Description”, “Copyright”, “Publisher”, “Author”, “Ratings” etc.

The containers are represented as video files with specific file extensions that help the users (more) and the computer (less so) to identify the container used for a particular “video”.

| Container | Name | Parent | Company/Consortium |
| --- | --- | --- | --- |
| 3GP/3GP2 | 3rd Generation Mobile | MP4 | 3GPP/MPEG |
| AVI | Audio Video Interleave | AVI | Microsoft |
| ASF | Advanced Streaming/System Format | | Microsoft |
| DIVX | DivX Media Format | | DivX |
| DV | Digital Video | | Sony, JVC, Panasonic |
| DVR-MS | Microsoft Digital Video Recording | | Microsoft |
| EVO | Enhanced VOB | VOB | MPEG |
| FLV | Flash Video | | Adobe (Macromedia) |
| M2TS/MTS | MPEG-2 Transport Stream 192 B | | MPEG |
| MCF | BSD/GNU Container | | BSD/GNU |
| MKV/MKA | Matroska Video/Audio | | Public Domain |
| MP4 | MPEG-4 | | MPEG |
| MPG/MPEG | MPEG Program Stream | | MPEG |
| MOV/QT | Apple QuickTime Movie | | Apple |
| NUT | NUT Project/GPL | | GPL |
| OGM/OGG | Ogg Media File | | Xiph.org |
| PS | MPEG-2 Program Stream | MP4 | MPEG |
| RAT | ratDVD (based on VOB) | VOB | GPL |
| PVR/DVR | MPEG-2 Transport Stream 188 B, DVR (Digital Video Recorder), PVR (Personal Video Recorder) | | MPEG |
| RIFF | Resource Interchange File Format (AVI) | AVI | Microsoft/IBM |
| RM/RMVB | Real Media – Variable Bitrate | | Real Networks |
| TS/TP/TRP | MPEG-2 Transport Stream 188 B | MP4 | MPEG |
| VOB | Video Object | | MPEG |
| WMA | Windows Media Audio | ASF | Microsoft |
| WMV | Windows Media Video | ASF | Microsoft |

| Container | Video Codecs | Audio Codecs |
| --- | --- | --- |
| 3GP/3GP2 | H.263, H.264, MPEG-4 | AMR-NB/WB, (HE-)AAC |
| AVI | MPEG-4, MJPEG, DV, Cinepak, Indeo | MP3, MP2, (AD)PCM, AC3 |
| ASF | Windows Media Video, VC-1, MS MPEG4 v3 | Windows Media Audio |
| DIVX | DivX | MP3, AC3, PCM |
| DV | DV | PCM |
| DVR-MS | MPEG-2 | MP2, AC3 |
| EVO | H.264, VC-1, MPEG-2 | (E)AC3, DTS(HD), PCM |
| FLV | H.263, H.264, VP6 | MP3, AAC, ADPCM |
| M2TS/MTS | H.264, VC-1, MPEG-2 | (E)AC3, DTS(HD), PCM |
| MCF | various | various |
| MKV/MKA | H.264, MPEG-4 | MP3, AC3 |
| MP4 | MPEG-4, H.264 | AAC |
| MPG/MPEG | MPEG-1, MPEG-2 | MP2 |
| MOV/QT | H.264, MPEG-4, MPEG-1, MJPEG, Sorenson Video | MP3, AC3, PCM |
| NUT | Anything | Anything |
| PS | MPEG-2 | MP2, AC3, DTS, PCM |
| OGM/OGG | Ogg Theora, Xvid | Ogg Vorbis, MP3, AC3 |
| PVR/VDR | MPEG-2, H.264 | MP2, AC3 |
| RAT (ratDVD) | XEB | AC3, DTS, PCM, MP2 |
| RIFF | ANI, MPEG-4, MJPEG, DV, Cinepak, Indeo | WAV |
| RM/RMVB | Real Video | Real Audio, AAC |
| TS/TP/TRP | MPEG-2, H.264 | MP2, AC3 |
| VOB | MPEG-2 | AC3, DTS, PCM, MP2 |
| WMA | – | Windows Media Audio |
| WMV | Windows Media Video, VC-1 | Windows Media Audio |

Pretty much all containers support, directly or via support tools, variable audio bit rates (VBR), variable video frame rates (VFR) and B-frames.

FLV, MPG/MPEG (and TS) do not support chapters. Those containers also do not support subtitles (or only very poorly via workarounds).

Wikipedia – Comparison of Container Formats

The Components

File Sources

I mentioned already that a container holds together all the pieces that we call “a video”. Now we have to start taking them apart and enter the dark side of video format land (slowly).

A file source is a special software component that is able to open one or more of the mentioned video containers and get them ready to be processed. It can read the header information of the container, check whether it makes sense, and determine what criteria the next components have to meet to be able to process this media file. You already learned that many containers can handle multiple video and also multiple audio encoding formats.
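To make the "read the header and check if it makes sense" part a bit more concrete, here is a minimal Python sketch of how a file source might guess the container from the first bytes of a file instead of trusting the file extension. The function name `sniff_container` and the labels it returns are my own inventions for illustration, and the list of signatures is far from complete. A real file source does a lot more validation than this.

```python
def sniff_container(header: bytes) -> str:
    """Guess the container from the first bytes of a file (not its extension)."""
    # AVI is a RIFF file whose form type at offset 8 is "AVI ".
    if header[:4] == b"RIFF" and header[8:12] == b"AVI ":
        return "AVI"
    # MP4, MOV and 3GP usually start with a size field followed by "ftyp".
    if header[4:8] == b"ftyp":
        return "MP4/MOV/3GP family"
    # Matroska files start with the EBML magic number.
    if header[:4] == b"\x1a\x45\xdf\xa3":
        return "MKV (Matroska/EBML)"
    if header[:3] == b"FLV":
        return "FLV"
    if header[:4] == b"OggS":
        return "OGG/OGM"
    # ASF (also used by WMV and WMA) starts with a fixed 16-byte GUID;
    # checking its first four bytes is enough for this sketch.
    if header[:4] == bytes.fromhex("3026b275"):
        return "ASF/WMV/WMA"
    return "unknown"


if __name__ == "__main__":
    print(sniff_container(b"RIFF\x00\x00\x00\x00AVI LIST"))
```

In practice you would read the first dozen or so bytes of the file in binary mode and pass them in. A renamed file (say, an .AVI that is really an ASF) is exactly the case where this kind of check beats the extension.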

Let me use a modified version of a classic saying: “Not all .AVI files are made equal”. There is usually not just ONE component that can handle ALL of the video formats an AVI container supports. Also, the container contains VIDEO and AUDIO data, which are two entirely different things (technically) that will have to be processed separately. They must be separated, split from one another, which is also referred to as demuxing.

Muxer, Demuxer (Splitter)

Let’s start easy and separate just the audio and the video.

Several of the media containers also support other types of data that are related to the video and/or audio content. More than one instance of each media type is possible, too. A good example is the audio selection on a DVD. Most DVD movies offer a choice of spoken languages and sound formats, such as Stereo, Surround Sound and Dolby Digital. Typically a set of different languages is also available for the subtitles, or closed captions for the hearing impaired folks out there.

Captions/subtitles, metadata and menus are not unimportant, but they are less of a problem in my opinion, so I decided to move them to the end. Hey, that does not make me intolerant towards people who are impaired in their hearing. I am a collector junkie myself and love metadata.

The file source component that I mentioned before is often part of a splitter/demuxer, which makes sense. A file source already has to know the format of the container. From there it is only one more logical step to take the parts of the media content in the container and pass each one on to the right next component for processing.

The reverse of splitting the content of a media container (demuxing) is combining the various parts that need to go into a container and wrapping them together into a single file that has all the video and audio data etc. in it. This process is called “muxing” and the component that does it is referred to as a muxer. The muxer receives its data from the counterparts of the components that the demuxer passes the different data streams to. One gets the audio to deal with, another one the video, and maybe yet another one takes care of the closed captions. The demuxer passes the data to a “decoder”, while the muxer receives its data from an “encoder”. An encoder, a decoder or both together are referred to as a codec.
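If you like to see ideas as code, here is a toy Python sketch of muxing and demuxing. It is not how any real container works internally. The packet layout (timestamp, stream name, payload) and the function names `mux` and `demux` are assumptions that I made up just to show the principle of interleaving streams into one sequence and splitting them apart again.

```python
from collections import defaultdict


def mux(streams):
    """Interleave the packets of several streams into one list, ordered by timestamp.

    streams maps a stream name to a list of (timestamp, payload) tuples,
    each list already sorted by timestamp.
    """
    packets = [
        (timestamp, name, payload)
        for name, items in streams.items()
        for timestamp, payload in items
    ]
    # sorted() is stable, so packets with equal timestamps keep their input order.
    return sorted(packets, key=lambda packet: packet[0])


def demux(packets):
    """Split an interleaved packet list back into one list per stream."""
    streams = defaultdict(list)
    for timestamp, name, payload in packets:
        streams[name].append((timestamp, payload))
    return dict(streams)


if __name__ == "__main__":
    source = {
        "video": [(0, "v0"), (40, "v1")],
        "audio": [(0, "a0"), (20, "a1"), (40, "a2")],
    }
    container = mux(source)
    print(container)
    print(demux(container) == source)
```

The point of the round trip at the end is that demuxing gives you back exactly the streams that went into the muxer, each one ready to be handed to its own decoder.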

Codec

The word codec is short for compressor/decompressor; a codec is also referred to as an encoder or decoder.

Codecs are in many cases not bound to a specific container, which does not make things easier, only more confusing, especially if you consider the number of Codecs and their variations that exist. A codec can be a hardware or software component, but in our case only the software Codecs are of importance.

That component can compress and/or decompress video using a particular compression algorithm.

All those Codecs and containers must be defined somewhere, so that the computer system and software can find out what they need in order to play back all the elements that make up your “video”. You probably noticed all the “aka” and “/” usages for the same or very similar things. It’s a mess!

The codec tweaking tool GSpot version 2.7 knows about 749 different FourCC video Codecs and 247 different audio compression Codecs. That gives you an idea of the scope of the problem. And this does not even include any special filters, splitters, file sources, renderers and all the other stuff that is part of the system and plays a role in bringing the video from a file onto your screen and the audio out of your speakers.

FourCC

To help the computer a little with making sense of things, a system was devised that is called FourCC or 4CC, which literally means “four-character code”. The folks from the video game company Electronic Arts introduced what is considered the father of the FourCC concept, called IFF (“EA IFF 85”, Standard for Interchange Format Files), in 1985 for the Commodore Amiga line of computers.

A simplified definition of 4CC could be: every media format is assigned a unique four-character code, which is included in the media data itself, so that apps and systems can look at a specific place in the media data to find out what format the data is in. The software can then simply check whether it has the information for the format labeled with that four-character code. If it does not, it returns an error and tells the system or user that it requires the specifications for that format to understand the structure and layout of the data in order to process it properly (e.g. show images from your last vacation instead of a bunch of colored dots that look more like works by Escher).
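Here is a small Python sketch of that lookup idea. The table `KNOWN_DECODERS` is a made-up stand-in for whatever your system has installed (I only filled in a few codes that appear in this article), and the function names are hypothetical. Packing the four characters into a little-endian 32-bit number is how such codes are commonly stored in Windows media structures. Folding the code to upper case before the lookup is my own choice, not part of any standard.

```python
import struct

# Hypothetical registry of installed decoders, keyed by FourCC.
KNOWN_DECODERS = {
    "DIVX": "DivX",
    "XVID": "Xvid",
    "AVC1": "H.264",
}


def fourcc_to_int(code: str) -> int:
    """Pack a four-character code into a little-endian 32-bit integer."""
    return struct.unpack("<I", code.encode("ascii"))[0]


def int_to_fourcc(value: int) -> str:
    """Turn a little-endian 32-bit integer back into its four characters."""
    return struct.pack("<I", value).decode("ascii")


def find_decoder(code: str) -> str:
    """Return the decoder for a FourCC, or raise LookupError if none is known."""
    try:
        # Assumption: fold case so that "xvid" and "XVID" find the same decoder.
        return KNOWN_DECODERS[code.upper()]
    except KeyError:
        raise LookupError(f"no decoder installed for FourCC {code!r}")


if __name__ == "__main__":
    print(hex(fourcc_to_int("XVID")))
    print(find_decoder("xvid"))
```

The `LookupError` branch is the code equivalent of the cryptic "cannot play this file" message: the player found the label, but has nothing registered under it.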

A good list of Codecs with their FourCC designation, company name and website URL is available at FOURCC.ORG.

The words “Filter” and “Codec” are unfortunately often used beyond their actual meaning, which only adds to the confusion. A “DirectShow Filter” might be a Codec, a file source, a splitter, a muxer, a renderer or maybe actually just a filter. The term Codec might be used in the same fashion, but it usually implies that the actual encoding and/or decoding piece is at least part of the software package. For example, if you download the DivX Codec from the DivX website, you get more than just the encoder or decoder, even more than the stuff that Windows needs to encode and create or decode and render videos using the DivX video compression algorithm. It comes with players, a converter, statistics and configuration tools.

Why Do We Need All This in the First Place?

Video data is nice, but it also has a flaw: it is huge and eats up a lot of space, so much that even with today’s low hard disk cost and higher available bandwidth it is not feasible to work with raw video data all the time. Codecs reduce the size of the video and audio in a flick significantly. A number of different algorithms are used for the compression, each with its own pros and cons. Some compress more with less loss of quality, but suck up a lot more resources and demand much more powerful hardware to decode the flicks again for presentation, so that not all hardware and software is able to support them yet. Other Codecs might not be as good regarding compression and loss of quality, but are faster and require much less computing power to do something with the crunched data files.

But it is also true that competition, patents and commercial interests play a significant part in this game. It does not have to be as messy as it is today.

Most popular Codecs on the Internet

(based on Codecs Database)

I will not mention every one of the 1000 or so video and audio codecs that exist. I will reduce it to a few that are popular online and offline and cover well over 90% of the entire spectrum.

Some Video Codecs

MPEG-1/MPEG-2

The video codecs used for standard DVD Video and VCD/SVCD. Typical file extensions are .VOB or .MPG/.MPEG.

MPEG-4 Part 2

Most .AVI files on the internet use one of the following two video codecs: the commercial DivX video codec (4CC codes: DIVX, DX50, DIV3, DIV4 and DIV5) or the open source Xvid codec (4CC: XVID, sometimes also xvid or Xvid, which should not matter but unfortunately sometimes does, if a software component is case-sensitive. I bitched about that elsewhere already).

Xvid is able to process many of the different versions of DivX, except for the really early ones.

MPEG-4 AVC (Advanced Video Coding)

.MP4 files and newer Apple QuickTime .MOV files (4CC: AVC1). If a program causes trouble when you want to load a MOV file with it, you can for example rename the MOV file to MP4, and that might do the trick for you. Typical are codecs based on the H.264 format, like the open source x264 codec (4CC: X264) or the commercial Dicas H.264 codec (4CC: DAVC).

QuickTime Movies

.MOV (also .QT) files from Apple. The latest versions use the H.264 format, but in the past Apple used a proprietary format (4CC: SV10) and then versions of the Sorenson Video Quantizer video codec (4CC: SVQ1 or SVQ3). Its version 3 was already a variation of the H.264 format, which made it the next logical step for Apple and its QuickTime product to use a standard video format and not continue with its own.

Flash Video

.FLV transformed from an exotic video format into a format most internet users are familiar with, probably mostly because of the popularity of YouTube, which uses Flash Video for showing movies on its social video sharing platform, and the general popularity of Flash for video on the Web.

Flash 7 uses the Sorenson Spark video codec (4CC: SPRK) and Flash 8 the On2 VP6 codec (4CC: VP60).

Windows Media

Microsoft developed their own video and audio codecs, which are known as Windows Media Video and Windows Media Audio. They are used in the media containers WMV and WMA, and in ASF for streaming.

Real Media

Like Microsoft, RealNetworks, the giant content portal on the Internet, developed its own video and audio codecs (4CCs: RV20, RV30, RV40). The .RM (Real Video) and .RA (Real Audio) file formats are still the primary formats for the RealNetworks RealPlayer. Real Video became popular because it was one of the first video formats that could be streamed over the Internet.

Some Audio Codecs

MPEG-1 Layer 3

Simply known as MP3, this is the best known and most used audio format today. Part of the credit for making it as popular as it is would probably have to go to the former peer-to-peer file sharing network Napster and of course to Apple iTunes and the iPod portable audio player. It was developed by the Fraunhofer Institute in Germany and also made headline news with its patent disputes and lawsuits in the early 1990s.

(AD)PCM

Raw WAV files are usually encoded in PCM format.

AC3

Better known as Dolby Digital (5.1). The popular sound format used on many DVD movies for high quality surround sound at home and at movie theaters.

MPEG Audio (AAC)

AAC stands for Advanced Audio Coding and was meant to be the successor of MP3. It is in widespread use today, for example in iPhones, iPods (and iTunes now) or the Sony PlayStation 3 game console.

DTS (AC)

You probably know DTS from the sound options of many DVDs. DTS is a common alternative audio format, competing with Dolby Digital. Spelled out, DTS stands for Digital Theater Systems - Coherent Acoustics. It is similar to Dolby Digital 5.1, but some say that it is slightly better than 5.1. Mh. :)

I mentioned Windows Media Audio already.

Filters

There are multiple meanings of the word “filter” in video land. In DirectShow (see further down below), every component is called a “Filter”, independent of its function and purpose. So a DirectShow Filter could be a File Source, a Splitter, an Encoder/Decoder (Codec), a Renderer or actually a Filter in the true sense of the word.

A Filter in traditional terms is a component that alters the video or audio stream. These are the classic filters that you probably used yourself every time you edited a video, sound or picture, without knowing that you used one. Photoshop users will smile now, because they should have a pretty good idea of what is coming.

Resizing a video, for example, changing its contrast, brightness, saturation, hue, color encoding or number of colors, turning color images into gray-scale ones, deformations, slicers, blenders, mixers, volume adjustments, normalizers etc. etc.: all those functions are performed by Filters. Something goes in, gets manipulated and then comes out, hopefully enhanced compared to the original in the way we wanted.
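For the curious, this is roughly what two such filters boil down to, as a minimal Python sketch. A frame here is simply a list of rows of (red, green, blue) tuples with values from 0 to 255, which is my simplification and not how any real video framework stores images. The gray-scale weights are the common ITU-R BT.601 luma weights, and all function names are made up for this example.

```python
def to_gray(pixel):
    """Convert one (r, g, b) pixel to a single gray value using BT.601 luma weights."""
    r, g, b = pixel
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def adjust_brightness(value, offset):
    """Add an offset to a gray value and clamp the result to the range 0..255."""
    return max(0, min(255, value + offset))


def apply_filters(frame, offset):
    """Chain both filters: color frame in, brightened gray-scale frame out."""
    return [
        [adjust_brightness(to_gray(pixel), offset) for pixel in row]
        for row in frame
    ]


if __name__ == "__main__":
    frame = [[(0, 0, 0), (100, 100, 100), (255, 255, 255)]]
    print(apply_filters(frame, 10))
```

Something goes in, gets manipulated and comes out again. A real filter does the same thing, only for every frame of the video and a lot faster.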

Renderers

Renderers are responsible for the output of the video and/or audio stream data to a device that is capable of displaying or playing back the content. That is the video card, which then renders the images onto your computer screen or display, or the sound card, which feeds your speakers directly or your receiver and high-end speaker system, so that you can listen to the sounds and voices of the audio or video file.

Streaming

Streaming is only a special form of rendering. It requires that the video data is rendered fast enough to allow the playback of the audio or video in real time, without the need to download the whole video or audio before you play it back. You might think that this is the normal way of doing it, but if you ever converted a video to a DVD, or a DVD movie (e.g. MPEG-2/PCM) to an MP4 video file (e.g. H.264/AC3), then you probably know that this process still takes longer than the video material itself is long. A 90-minute movie usually takes more than 90 minutes to re-code/convert from one media type/format to another. The conversion of a video file to a high-definition disc format (such as Blu-ray) can take many hours or days, depending on the hardware used for the conversion.

That is not an option for streaming, especially if the video is streamed while the content is being generated/recorded, such as a live event broadcast. You don’t want to wait until tomorrow to see today’s ball game because it takes a day to recode the video images so that you can watch them on your computer or TV, right?
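The arithmetic behind this is simple enough to write down. The following Python sketch uses a "real-time factor" (seconds of content produced per second of processing), which is a term I am borrowing for illustration, and the numbers in the example are made up. A factor of at least 1.0 means the encoder keeps up with a live source.

```python
def realtime_factor(content_seconds, processing_seconds):
    """Seconds of content produced per second of processing time."""
    return content_seconds / processing_seconds


def can_stream_live(content_seconds, processing_seconds):
    """A live stream only works if encoding keeps up with real time."""
    return realtime_factor(content_seconds, processing_seconds) >= 1.0


if __name__ == "__main__":
    movie = 90 * 60  # a 90-minute movie, in seconds
    # Hypothetical conversion that needs 120 minutes for the 90-minute movie.
    print(realtime_factor(movie, 120 * 60))
    print(can_stream_live(movie, 120 * 60))
```

A conversion that needs two hours for a 90-minute movie runs at three quarters of real time, which is fine for burning a disc overnight and useless for a live broadcast.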

Not all video formats can be streamed, and the ones that can need to be transmitted to the receiver in an optimized way to ensure that the video stream is constant, to guarantee fluid video images and flawless sound. Different streaming protocols are used for the transmission of the video and audio data, like RTSP, PNM, MMS or HTTP.

Components Wrap Up

Now you have all those components and elements in the process, and all these different methods and formats, where none of them is easy to deal with even by itself. It is already hard enough to get all those things to work together in unison that the last thing you would want to do is add another layer of complexity and make things even more complex than they already are, right? Yeah, sure, common sense tells you that avoiding this is probably a good idea, but since when was common sense applied to the real world? Think about it!

DirectShow (DS) – Filters, Video for Windows (VfW)

Now Microsoft thought that it could make things even messier... Ahem, I mean improve on them. But I will repeat what they say themselves in the introduction to their “Microsoft DirectShow 9.0 System Overview” at MSDN.Microsoft.com.

The Challenge of Multimedia

Working with multimedia presents several major challenges:

Multimedia streams contain large amounts of data, which must be processed very quickly.

Audio and video must be synchronized so that it starts and stops at the same time, and plays at the same rate.

Data can come from many sources, including local files, computer networks, television broadcasts, and video cameras.

Data comes in a variety of formats, such as Audio-Video Interleaved (AVI), Advanced Streaming Format (ASF), Motion Picture Experts Group (MPEG), and Digital Video (DV).

The programmer does not know in advance what hardware devices will be present on the end-user’s system.

The DirectShow Solution

DirectShow is designed to address each of these challenges. Its main design goal is to simplify the task of creating digital media applications on the Windows platform, by isolating applications from the complexities of data transports, hardware differences, and synchronization.

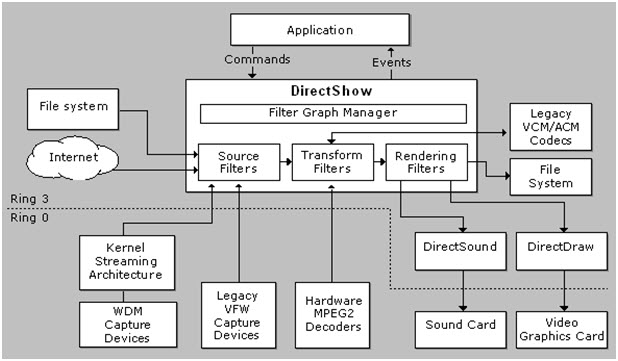

To achieve the throughput needed to stream video and audio, DirectShow uses DirectDraw and DirectSound whenever possible. These technologies render data efficiently to the user’s sound and graphics cards. DirectShow synchronizes playback by encapsulating media data in time-stamped samples. To handle the variety of sources, formats, and hardware devices that are possible, DirectShow uses a modular architecture, in which the application mixes and matches different software components called filters.

DirectShow provides filters that support capture and tuning devices based on the Windows Driver Model (WDM), as well as filters that support legacy Video for Windows (VfW) capture cards, and codecs written for the Audio Compression Manager (ACM) and Video Compression Manager (VCM) interfaces.

The following diagram shows the relationship between an application, the DirectShow components, and some of the hardware and software components that DirectShow supports.

The “simple” concept of DirectShow by Microsoft, illustrated
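To give you a feeling for what "time-stamped samples" and "mix and match filters" mean, here is a toy model in Python. To be very clear: this is not the DirectShow API, which is a COM-based interface. The classes `Sample`, `Filter`, `Decoder` and `Renderer` and the function `run_graph` are invented for this sketch and only mimic the idea of samples flowing through a chain of components.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """A piece of media data with the time at which it should be presented."""
    timestamp_ms: int
    data: str


class Filter:
    """Base class: receives a sample and returns a (possibly changed) sample."""

    def process(self, sample: Sample) -> Sample:
        return sample


class Decoder(Filter):
    """Pretends to decode by relabeling the payload."""

    def process(self, sample: Sample) -> Sample:
        return Sample(sample.timestamp_ms, sample.data.replace("compressed", "decoded"))


class Renderer(Filter):
    """Collects what would be shown on screen, in presentation order."""

    def __init__(self):
        self.shown = []

    def process(self, sample: Sample) -> Sample:
        self.shown.append((sample.timestamp_ms, sample.data))
        return sample


def run_graph(samples, filters):
    """Push every sample through the chain of filters, ordered by timestamp."""
    for sample in sorted(samples, key=lambda s: s.timestamp_ms):
        for component in filters:
            sample = component.process(sample)


if __name__ == "__main__":
    renderer = Renderer()
    run_graph(
        [Sample(40, "compressed frame 2"), Sample(0, "compressed frame 1")],
        [Decoder(), renderer],
    )
    print(renderer.shown)
```

Swapping the `Decoder` for a different one without touching the rest is the whole appeal of the modular design, and, as you will see, also the source of much of the trouble.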

The DirectShow API media-streaming architecture was introduced with the DirectX SDK for 32-bit Windows operating systems. If you remember Windows 95 and 98 and played some games on them, then you will remember that DirectX was always required, and that the minimum version needed to run the game was even shipped on the game’s setup CD-ROM and installed as part of the setup process.

The DirectX SDK kind of died as a package with versions etc. The last DirectX version released was a DirectX 9.0 update from February 2005. DirectShow was moved to the Windows SDK. Windows 2003 Server and Vista are the first systems where only the Windows SDK is used and no DirectX anymore.

DirectShow, as part of DirectX, was the successor of Video for Windows (VFW), which was introduced in 16-bit Windows (Windows 3.0, 3.1 and Windows for Workgroups). For backwards compatibility, to be able to run 16-bit apps under 32-bit Windows, the 32-bit versions of Windows still support VFW.

But they did not leave VFW completely alone. Starting with DirectX, many of the VFW features were suppressed, causing incompatibility issues for some 16-bit video applications when used in 32-bit Windows. They launch and kind of work, but at the same time not really, at least not as expected and, most of the time, wanted.

Getting your computer to process a type of video with an application that uses DirectShow does not make things work for applications that use VFW, and vice versa. This can get funny and made me scratch my head more than once. You get phenomena like player X being able to play back a video in format Y after you installed an add-on (probably a Codec), while player Z remains unable to do anything with that video.

One uses VFW and the other DS. Both are separate frameworks and each must be supported specifically by any component that is part of your video experience. Some applications support both, but most use either one or the other only.

Because of this mess, some applications use neither of those systems and bring their own system to process video data. Those applications are limited to what they come with and support themselves. Some allow third parties to create plug-ins to support additional Codecs and file formats. Nullsoft’s Winamp player would be a popular example of this approach.

DirectShow is like Lego, with all the same great attributes and possibilities, and virtually the same flaws as well, because of these great possibilities. If you ever owned Lego and played with it, chances are that you owned more than one set. You probably also mixed the parts of different sets and did not keep them separate. Over time it became harder to get the original sets together again, because the manual got lost and you had to start guessing which part goes where and whether it was part of a specific set or not. Parts also got lost sometimes. You had to improvise and substitute things, which was only possible to a limited extent, because you had to make sure that the parts fit.

The process is virtually the same with DirectShow. A set would be, for example, a video file that you want to play back with a DirectShow-enabled video player, such as Windows Media Player. The components of the set are actually mini sub-sets themselves, like a set in Lego that was part of a theme, e.g. the modern city or the knights theme. Imagine other players coming along and using your sets, bringing some new sets, or even modifying some of the sets that you already built because they did not like the way you did it.

Those other players can be any video-related software (from big app to small tool) that uses DirectShow. Even a small and not very important tool that you want to use for splitting a video into multiple pieces, for example, might mess things up or cause the system to do things differently than it used to. Stuff is changed without warning most of the time.

I do not have an answer to the problem, that you cannot sit down, make everything work the way you like and then keep it forever. I can only say that if you got everything to work how you need it, be careful what software you install in the future and leave settings related to video and audio alone.

And to make things even more interesting for the future, Microsoft decided to introduce yet another system to the mix with their upcoming operating system version dubbed Windows 7, with the bright and shiny name:

Windows Media Foundation. “It will make everything better, promise”. It sure will. The only question that remains is for whom. Mhh?!

Video Tools

Splitter/Joiner

There are also video and audio file splitter tools that do what their name implies. They let you slice up a video or audio file into multiple pieces (splitter) or combine multiple video or audio files into a single file (joiner). You cannot simply cut a video or audio file into pieces or copy different media files together (like with the binary copy command in DOS).

Media files have a header, data at the beginning of the file that is crucial for media players to be able to play them. A missing or corrupt header can make the entire media file unusable and unplayable. If you simply cut a file in half, you would end up with one file that now has incorrect information about the media file and a second one with no information at all. Individual video frames also have multiple components and a header. If it is a compressed video file (which virtually all video files used by regular users are), then it is even more complicated, because some frames, the so-called key frames, are needed to render a bunch of other frames.

Cutting a file into pieces without considering all these factors would be like going to a butcher and having him cut the next best piece off a cow when you order X pounds of steak. No pretty picture, but I hope that you get the idea.

Cutting a media file into pieces is generally easier than joining pieces together (as with almost everything else in life, taking things apart is easier than putting them back together). As long as the cutter tool knows the file format structure, knows where to cut and how to rebuild the file header, things will be fine. If the tool does not re-encode the slices in the process, cutting is also done pretty fast.
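Knowing "where to cut" mostly means respecting the key frames. Here is a minimal Python sketch of that one decision, assuming the tool already has a sorted list of the frame numbers that are key frames. The function name `snap_to_keyframe` and the example frame numbers are made up. A real cutter also has to rebuild headers and indexes, which this sketch ignores completely.

```python
import bisect


def snap_to_keyframe(keyframes, cut_frame):
    """Return the last key frame at or before cut_frame.

    keyframes must be a list of frame numbers sorted in ascending order.
    """
    index = bisect.bisect_right(keyframes, cut_frame)
    if index == 0:
        raise ValueError("no key frame at or before the requested cut point")
    return keyframes[index - 1]


if __name__ == "__main__":
    keyframes = [0, 250, 500, 750]
    # The user wants to cut at frame 300; the nearest usable point is earlier.
    print(snap_to_keyframe(keyframes, 300))
```

This is why a cutter that does not re-encode often refuses to cut exactly where you clicked and jumps a second or so backwards instead.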

Joining files is tougher, unless you want to re-join the pieces that you just created with your cutter. This is rarely the case though. If you only want to slice a media file up for storage and transportation, because the media file is too large, then you should not use a media cutting tool in the first place. Breaking it up with your backup software or with tools like ZIP, RAR, ACE, HJSplit or MasterSplitter will do just fine.
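What those plain file splitters do is media-agnostic, and it can be sketched in a few lines of Python. The function names are mine, and real tools like the ones named above add their own headers, checksums or compression on top. The pieces produced this way are not playable on their own. They only become a valid media file again after they are joined back together in the right order.

```python
def split_bytes(data: bytes, chunk_size: int):
    """Cut raw bytes into chunks of at most chunk_size bytes, ignoring the format."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def join_bytes(chunks):
    """Glue the chunks back together in order to restore the original bytes."""
    return b"".join(chunks)


if __name__ == "__main__":
    original = bytes(range(10))
    parts = split_bytes(original, 4)
    print([len(part) for part in parts])
    print(join_bytes(parts) == original)
```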

Simpler joiner tools are only able to combine media files that have exactly the same format. When I say exactly, I mean more than just having the same file extension. The to-be-joined files must be compressed with the same video and audio Codecs, and the settings that determine the video and audio quality, such as bit rate, frame rate, sampling rate and resolution, have to be identical as well. If this is the case, the joiner only has to re-create the header information and does not have to re-encode any audio or video data.
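The "exactly the same format" check can be pictured as a field-by-field comparison. The following Python sketch assumes that the properties of each file have already been read out by some other tool. The `MediaInfo` class, its field names and the example values are all hypothetical and only cover the settings mentioned above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class MediaInfo:
    """The properties a simple joiner would need to find identical."""
    video_codec: str
    audio_codec: str
    width: int
    height: int
    frame_rate: float
    sample_rate: int
    video_bitrate_kbps: int


def mismatched_fields(a: MediaInfo, b: MediaInfo):
    """List the names of all properties in which the two files differ."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


def can_join_without_reencoding(a: MediaInfo, b: MediaInfo) -> bool:
    """True only if every compared property is identical."""
    return not mismatched_fields(a, b)


if __name__ == "__main__":
    part1 = MediaInfo("XVID", "MP3", 640, 480, 25.0, 44100, 1200)
    part2 = MediaInfo("XVID", "MP3", 640, 480, 29.97, 44100, 1200)
    print(mismatched_fields(part1, part2))
    print(can_join_without_reencoding(part1, part2))
```

Two files can both be called .AVI and both use Xvid, and a single differing frame rate is still enough to make a simple joiner give up.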

More sophisticated joiners are a bit more flexible and will make adjustments to the source media in order to be able to join them. Some even re-encode the whole video and audio and work like a converter, compositing and rendering tool all in one. If the joiner has to re-encode anything, the process can take considerably more time, but it provides the most flexibility regarding the video and audio sources that can be combined, even allowing the joining of media in entirely different formats.

To join .AVI files that are in the same format, you can use the free tool VirtualDub. You can also use compositing tools like Windows Movie Maker (also free), or professional software like TechSmith Camtasia Studio, Adobe Premiere, Pinnacle Avid, Ulead Video Studio (now Corel) etc., but there you have to re-encode the results every time, regardless of whether it is necessary or not.

There are many commercial tools available. I can recommend the Splitter and Joiner tools from Boilsoft, because they are very universal and support various video file formats, including .AVI, .WMV, .ASF, .RM and .MPG. They cost about $50 together and you can test them free for a few days before you have to decide whether you want to buy them or not.

The Video Joiner by ImTOO is more flexible and re-codes the source video files to the format that you select. It only costs $19, with a free trial period to test it yourself.

Resources

The drops of water on the hot stones in the video & audio encoding and decoding desert. Some drops might vaporize away without quenching your thirst, while others might provide just the little bit of water that you need to survive another day in this desert. 🙂

Codec Packs

It became clear to geeks and other people with a deep understanding of the subject that you cannot expect a normal user to deal with all of this. A large number of people would probably not understand it, and an even larger number do not even want to understand it to begin with. I belong to the second group, and not to the part of it that overlaps with the first. I just want to watch and edit a movie, that's it.

The market failed. Competition led to incompatibilities and conflicts, and to the need to spend the equivalent of a college education in time and resources just to be able to talk the talk and blame the problem on the other party.

Ordinary people stepped up, developed tools and built packages, most of the time absolutely free, that other people can download and install to make many of the things work that usually do not, or at least to make the problems smaller and easier to deal with.

These packages are called Codec Packs. They are collections of container splitters, Codecs, filters and tools that are pre-configured to work together, with as many components as possible compatible with each other.

The problem is that Codec Packs, if not done right, can make things worse. Some packs also act as if doing their own thing, ignoring standards and best practices and creating their own little island, were the solution to the problem. This led to the love and hate that you will be exposed to if you do some research of your own on this subject. Some people despise the packs, some hate them, and others love them and call them saviors.

To sum it all up, Codec Packs may not be the quick and generally applicable solution to a widespread problem that they set out to become. From what I heard from “experts” and other geeks, and based on my own experiences with those packs, there are only a few that I would recommend.

Some of the biggest and most popular Codec Packs include:

- K-Lite Codec Pack (KLCP): provides different packs with different configurations for the different types of users out there. Its site also has pages with the name, a brief description and a download link for circa 300 video and audio Codecs, filters, splitters, Muxers, De-Muxers etc.

- Combined Community Codec Pack (CCCP) is the pack that is praised the most (or hated the least) by people who call themselves experts in this subject. I was okay with it, but personally preferred K-Lite.

Other Codec Packs

Please use these with caution, and check whether they offer everything that you need before you install them.

- Windows Essential Codec Pack (W.E.C.P.)

- Cole2k Media – Codec Pack

- Gordian Knot Codec Pack

- MediaCoder Pack

- X-Codec Pack

Special Players and Codecs

- QuickTime Alternative

- RealPlayer Alternative

- nLite Windows Installation Customizer

- WMLite, TTPack and QTLite for light-weight versions of Windows Media Player, Apple QuickTime, Real Networks Real Player, FFdshow, Ogg and AC3

- Media Player Classic (MPC) and VobSub

- DirectShow FilterPack (DSFP)

I don’t use codec packs anymore. I install and deal with codecs one by one. This is probably the best way to go in the long run, once you are familiar with the various options out there and know what you need for your own purposes. The needs of video creators and consumers are not always the same. I am both and experienced this first hand.

Info and Help Resources for Audio & Video

- http://www.doom9.org/

- http://www.videohelp.com/

- http://www.free-codecs.com/

- http://www.codecguide.com/

Advanced: Tools you got to have

Only for the geeks out there: tools to tweak, troubleshoot and fix codec- and filter-related issues.

- Media Info Lite

- Graph Edit

- GraphEditPlus (commercial software, $60)

- GSpot Codec Appliance

- AVICodec

- RadLight Filter Manager

- DirectShow Filter Manager

- Codec Tweak Tool (part of the K-Lite Codec Pack)

- Sherlock – The Codec Detective

- WinXP Decoder Check (alternative download)

- VirtualDubMod

– DirectShow Input Driver for VirtualDub

– VirtualDub MPEG2

- MP3Tag

- abcAVI

- aviUtl

Codec Lists and Databases

Acronyms and Definitions

Source: http://www.fourcc.org/

| Acronym | Definition |
| --- | --- |
| ACM | ACM stands for Audio Compression Manager. “ACM” files are audio codec drivers installed on your system which export methods that can be performed on a particular audio stream. This includes converting audio from one type of stream to another. An example is an MP3 ACM, which could convert MP3 audio to PCM audio. |
| ATSC | Advanced Television Systems Committee. The technical group that defined the high definition TV standard for US terrestrial transmission. |
| AVC | Advanced Video Coding. Otherwise known as MPEG-4 Part 10 or H.264, this is the codec that most of the world's broadcasters are moving to for HD transmissions. |
| AVI | Audio Video Interleave – a Windows file format used to store movies. |
| Codec | CODEC stands for COmpressor/DECompressor – a software or hardware component that can compress and/or decompress data using a particular compression algorithm. Though CODECs are generally thought of in the context of video and audio, they are not limited to this scope. |
| FOURCC | Four Character Code – a 32-bit value (8 hexadecimal digits or 4 ASCII characters) used to identify the pixel format or compression standard (codec) used to store images or video files. FOURCCs are also used for a similar purpose in some audio applications. |
| HD | High Definition. This refers to a video picture size higher than SD (see below), typically one of the resolutions defined by ATSC such as 1080i (1920×1080) or 720p (1280×720). The 480p format (720×480) that is sometimes listed alongside them is usually classed as standard or enhanced definition rather than HD. |
| JPEG | Joint Photographic Experts Group. The standards group that defined the hugely successful JPEG still image codec. |
| MPEG | Moving Picture Experts Group. The clever people who brought you the ISO standard Codecs that are used in TV broadcast today. |
| NTSC | National Television System Committee. The group which defined the 480 (visible) line video standard used for analog and pre-HD digital TV transmissions in the USA. |
| PCM | PCM is Pulse Code Modulation. This is essentially “raw”, uncompressed audio and is generally the format that audio hardware works with directly. Though some hardware can play other formats directly, software generally has to convert any audio stream to PCM before playing it. An analog audio signal looks like a wave with peaks and valleys; the height of the wave is its amplitude. To digitize it, the hardware samples the signal at a given frequency, such as 8000 Hz: it measures the voltage that many times per second and stores a value for each measurement. Remember, I'm not an audio engineer, so this is just a simplistic way of thinking about it. In 8-bit PCM, for example, I believe the baseline is 127 or 128. This is the middle, silence. Values below and above it represent the wave swinging to either side of the baseline. If you take a series of these values and play them back at the sampling rate, they make a sound. |
| RGB | Red Green Blue – a method of describing colors commonly used in PC graphics. |
| SD | Standard Definition. The size of a typical video image for a “legacy” TV system. In the US, this will typically describe an image with 480 lines (and one of a number of widths, 720 being the maximum, 480, 640 and 704 being other common choices). In Europe (and other areas which use PAL TV standards), SD refers to an image with 576 lines of resolution. |
| YCrCb | Color format typically used in video processing. A color is defined in terms of a luminance (Y, brightness) value and two “color differences” or chrominance values (Cr and Cb). The “C” indicates a color difference, and R and B refer to red and blue: Cr is the red difference and Cb the blue difference. Y denotes luminance, not yellow. |
| YIQ | YIQ is another color format and another way of defining a color. It is commonly used in NTSC video systems, as far as I can remember. |
| YUV | YUV also describes a luminance/chrominance color model. This is frequently used interchangeably with YCrCb though, technically, they are different. |
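The FOURCC entry in the table can be made concrete with a few lines of Python. This is only a sketch. The function names are my own, and the byte order (first character in the lowest byte) is an assumption based on how Windows usually packs these codes. Other systems may store them the other way round.

```python
def fourcc_to_int(code):
    """Pack a four-character code such as "XVID" into a 32-bit number.

    Assumes the Windows convention: first character in the lowest byte.
    """
    raw = code.encode("ascii")
    if len(raw) != 4:
        raise ValueError("a FOURCC must consist of exactly 4 ASCII characters")
    return int.from_bytes(raw, "little")


def int_to_fourcc(value):
    """Turn a 32-bit number back into its four-character code."""
    return value.to_bytes(4, "little").decode("ascii")


# The same identifier, once as 8 hexadecimal digits and once as 4 characters.
print(f"{fourcc_to_int('XVID'):08X}")   # 44495658
print(int_to_fourcc(0x44495658))        # XVID
```

This is why the same Codec can show up as a readable name in one tool and as a cryptic hexadecimal number in another: both are the same four bytes, just displayed differently.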

Posted on: Saturday, May 9th, 2009 13:01

Thanks a lot for this informative post!

Awesome article. Finally someone has taken the time to explain the various terms that you see on video forums (splitter, decoder, renderer, etc.).